It was 3:17 PM on Tuesday, just two days ago. My phone buzzed with an incoming call from an unknown number. I almost let it go to voicemail, but something made me pick up. The voice on the other end was unmistakably Sarah, my editor at Forbes. She sounded frantic, a little out of breath, insisting I urgently needed to transfer a small sum – just $500 – to a new freelancer's account for a last-minute story payment. The bank's system was supposedly down, and time was critical.

My gut screamed no. Sarah would never ask me to do that. But the voice, the subtle intonation, the slight hesitation before a specific word – it was *her*. For a terrifying minute, I was genuinely conflicted, my brain scrambling to reconcile the impossible. Then I remembered our internal protocol for urgent payments and called her direct line. She answered, utterly bewildered, having been in a meeting all afternoon. What I'd heard wasn't Sarah. It was a perfectly cloned audio deepfake, woven into a textbook social engineering attempt. And if it could fool me, a tech journalist immersed in this stuff, it can fool anyone.

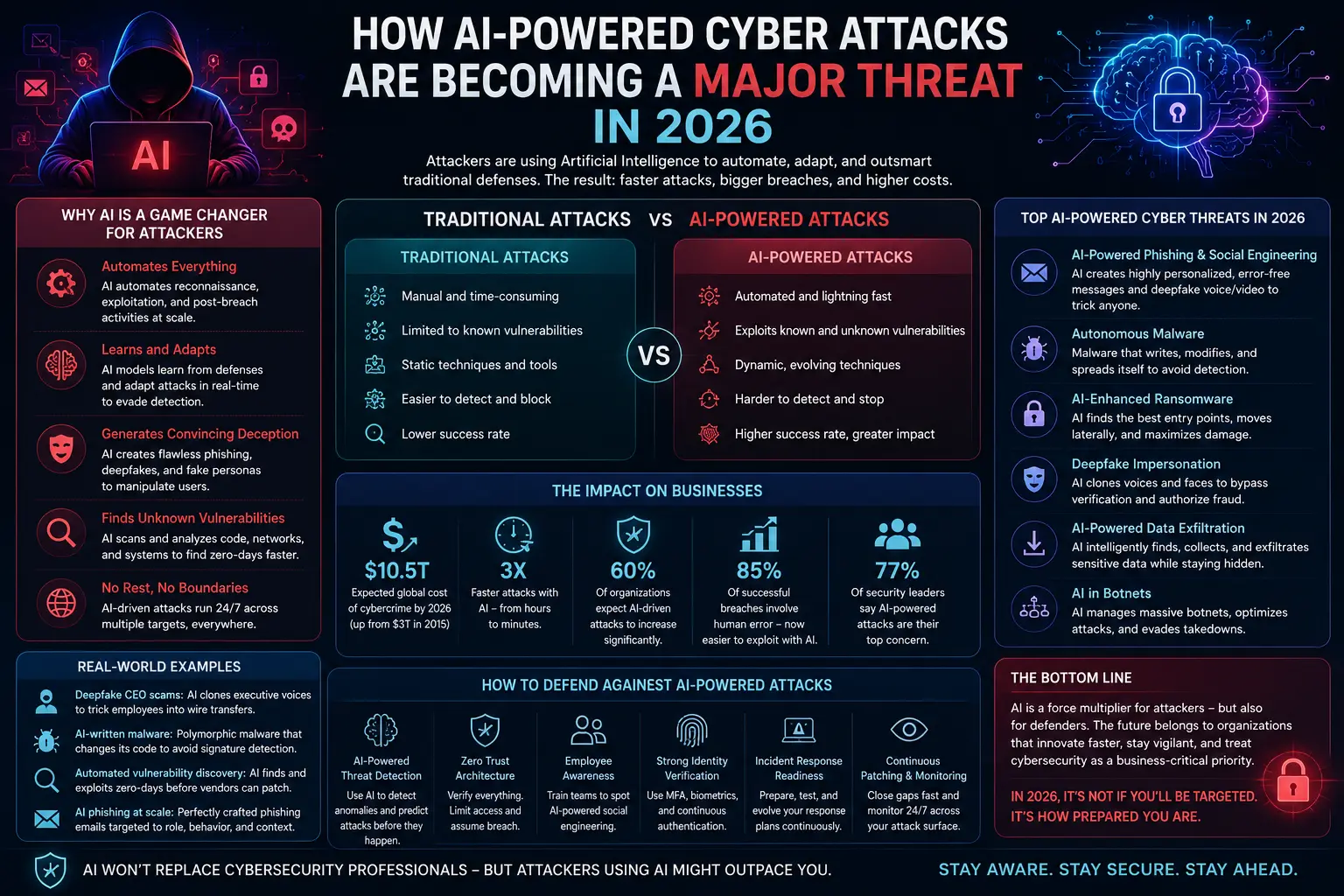

Welcome to April 4, 2026. The deepfake world isn't coming; it's here, knocking on your digital door, wearing a mask of familiarity. We are past the age of grainy, obvious fakes. Today's deepfakes are precision-guided missiles aimed squarely at the most vulnerable and powerful target in any system: the human being.

The Digital Chameleon: When Reality Becomes Play-Doh

Imagine a master illusionist, not on a stage with smoke and mirrors, but operating entirely within the digital realm, able to reshape your perception of reality at will. That's the power deepfake technology wields today. It's no longer just about swapping faces in celebrity videos; it's about synthesizing entire personas, creating a digital chameleon capable of mimicking anyone, anywhere, with terrifying accuracy.

Voice Clones and Video Phantoms: A New Era of Deception

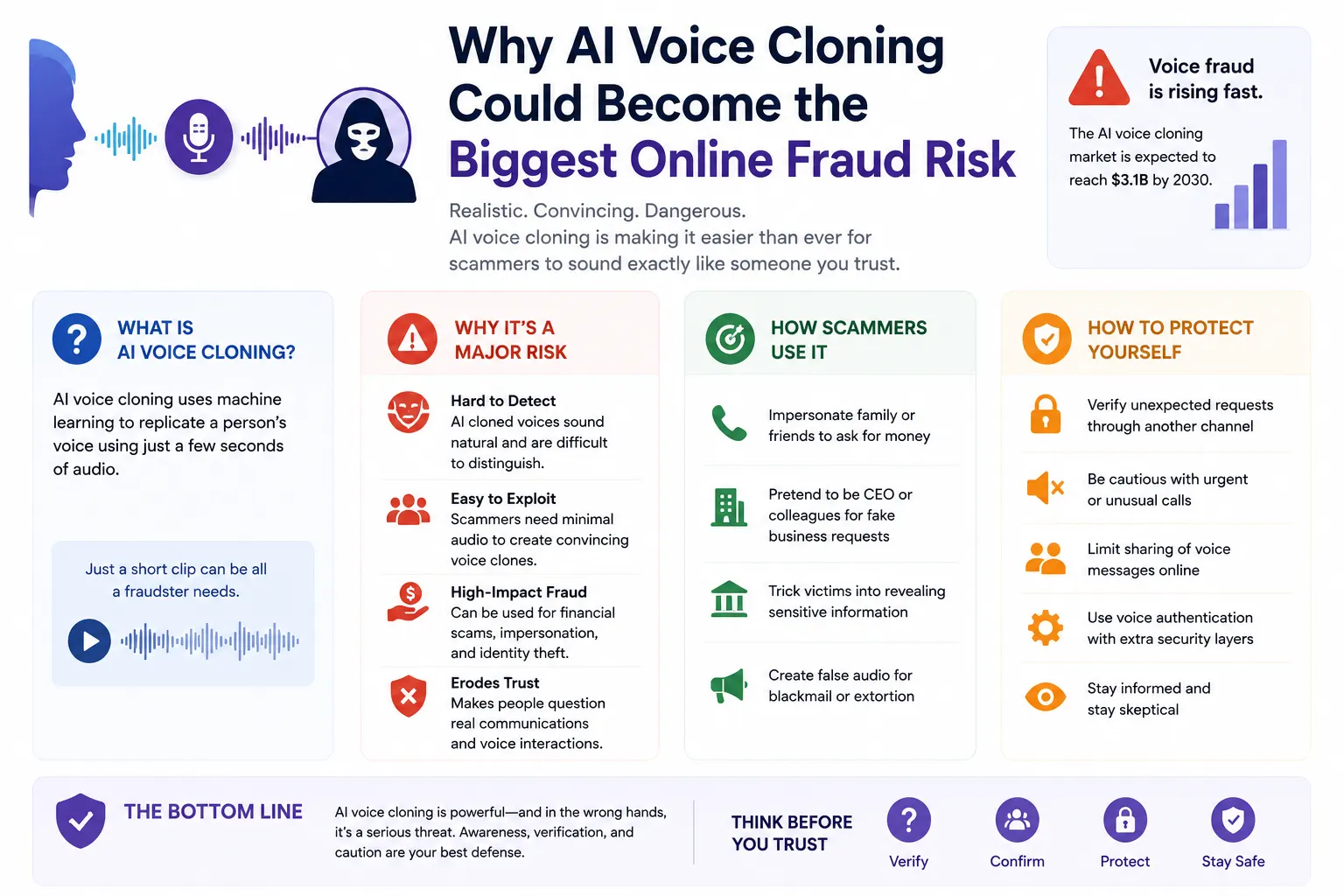

The incident I experienced is just a ripple in a tsunami of sophisticated attacks. Recent advancements in generative AI have shrunk the 'training data' requirement from hours of audio or video to mere seconds. A voice model, for instance, can now be built from a single minute-long phone call, making every public interview, podcast, or even casual social media video a potential data goldmine for bad actors. Last month I spoke with Dr. Lena Hansen, a lead cybersecurity researcher at Sentinel Labs, who told me, "The barrier to entry for deepfake generation has plummeted. It’s like giving everyone a professional-grade forgery kit. The only limit is imagination, or rather, malicious intent."

Key Insight: The most dangerous aspect of advanced deepfakes isn't their existence, but their seamless integration into cunning social engineering narratives, blurring the lines of digital and emotional trust.

By the Numbers: A Q1 2026 report by Cybersecurity Ventures predicts that over 70% of all successful phishing and social engineering attacks in 2027 will leverage AI-generated deepfake elements (voice, video, or text) to enhance credibility, a staggering jump from just 15% in 2024.

The Master Angler: Social Engineering in the AI Ocean

Social engineering has always been about exploiting human psychology, a refined art of manipulation. In this deepfake world, the attacker has evolved from a trawler casting a broad net into a master angler, custom-tying lures designed to appeal to your deepest biases, your most immediate concerns, or your strongest emotional triggers. Attackers are no longer guessing; they *know*.

Pre-computation & Psychological Targeting

Gone are the days of generic "Nigerian Prince" scams. Today's attackers use publicly available data – your LinkedIn profile, your social media posts, your company's press releases – combined with predictive AI to craft hyper-personalized narratives. If you just posted about your child's soccer game, expect a deepfake call from a "colleague" asking for help with a "charity drive for youth sports." This level of contextual awareness makes the deception incredibly hard to detect.

Surprising Stat: Did you know that roughly 60% of individuals who fall victim to social engineering attacks admit they knew about the risks but were convinced because the attack "felt too personal to be fake"? This data point, from a 2025 psychological study on cyber fraud conducted by the Human-Tech Interaction Lab, underscores the power of personalization.

Our Brains: The Most Vulnerable Algorithm

For all our technological sophistication, the human brain remains an analog machine, wired for trust, pattern recognition, and emotional response. Deepfakes and social engineering exploit these very hardwired traits. We are conditioned to trust what we see and hear, especially when it comes from a familiar face or voice. It's a cognitive shortcut that served us well in tribal societies, but it's a gaping vulnerability in our hyper-connected, AI-powered reality.

The Power of Authority and Scarcity

Attackers frequently invoke principles of influence like authority (a deepfake CEO asking for urgent action) or scarcity (a limited-time "opportunity" requiring immediate financial transfer). When coupled with a convincing deepfake, these psychological triggers create immense pressure, bypassing critical thinking and activating our fight-or-flight response, which is terrible for rational decision-making.

- Immediate: Implement a "verify-before-action" protocol for *any* unexpected request, especially a financial one. If your boss emails you asking for a payment, call them back on a known, verified number.

- Medium-term: Educate your teams. Regular, engaging training on recognizing deepfakes and social engineering tactics is crucial. Make it interactive; use simulated deepfake calls.

- Long-term: Invest in AI-powered detection tools, but understand they are a shield, not a silver bullet. The human element of vigilance and skepticism remains paramount.

Key Takeaways for Navigating the Deception Economy

- Assume Nothing: Treat every unsolicited request, especially those involving money or sensitive data, with extreme skepticism.

- Verify Always: Establish clear, out-of-band verification protocols for high-stakes interactions. A phone call to a known number is often your best defense.

- Educate Continuously: Your human firewall is only as strong as its training. Keep your team updated on the latest deepfake tactics.

- Layered Defenses: Combine human vigilance with technical solutions like deepfake detection software and robust multifactor authentication.
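One way to picture how these layers might fit together is the short Python sketch below. It is an illustration under stated assumptions, not a reference implementation: the function name `decide`, the 0-to-1 `detector_score`, and the `block_threshold` default are all hypothetical, and no real detection product is being modeled.

```python
from typing import Optional


def decide(verified_out_of_band: bool,
           mfa_passed: bool,
           detector_score: Optional[float],
           block_threshold: float = 0.8) -> str:
    """Combine the layers so that no single layer can approve on its own.

    detector_score is a hypothetical 0..1 "likely synthetic" score from a
    deepfake detector, or None if no detector was run. The threshold is an
    illustrative value, not a recommendation.
    """
    # A high detector score can block a request, but a low score never
    # approves one: detectors can miss newer generative models.
    if detector_score is not None and detector_score >= block_threshold:
        return "block"
    # Human-driven verification through a known channel is mandatory.
    if not verified_out_of_band:
        return "hold: verify via known channel"
    # Multifactor authentication is a separate, independent layer.
    if not mfa_passed:
        return "hold: complete MFA"
    return "approve"
```

The design choice worth noticing is the asymmetry: the detector is only ever allowed to say "no". A clean score from the software does nothing to skip the phone call to a known number.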

Frequently Asked Questions

What exactly is advanced social engineering in a deepfake world?

It's the sophisticated art of psychological manipulation, where bad actors use AI-generated deepfakes (like cloned voices or synthetic videos) to impersonate trusted individuals or entities. This makes their deceptive narratives incredibly convincing, exploiting human biases to trick people into divulging information or taking harmful actions.

How can I tell if a voice or video call is a deepfake?

It's increasingly difficult. Subtle inconsistencies might include unnatural speech patterns, robotic inflections, poor lip-syncing in videos, or strange lighting. However, the best defense isn't detection, but process: always verify unusual requests through an alternative, trusted communication channel. Don't rely solely on what your eyes or ears tell you in real time.

Is deepfake detection software reliable enough?

While deepfake detection software is rapidly improving, it's engaged in an arms race with deepfake generation. It's a crucial layer of defense, but it's not foolproof. New generative models can quickly bypass existing detectors. Think of it like a digital immune system – constantly adapting, but never perfect. Human skepticism and established verification protocols remain your primary line of defense.

What's one simple step I can take right now to protect myself or my business?

Implement a "pause and verify" rule. For any urgent request (especially financial or data-related) received via email, text, or a suspicious call, pause. Then, use a pre-established, trusted contact method (like calling the person on their known work number, not replying to the email or calling back the number that just called you) to confirm the request's legitimacy. This simple step can interrupt most advanced social engineering attacks.
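For teams that want to write the rule down, here is a minimal Python sketch of how "pause and verify" could be expressed. Everything named in it is an assumption made for illustration: the `TRUSTED_DIRECTORY` contents, the `HIGH_STAKES` categories, the `Request` fields, and the placeholder phone numbers are hypothetical, not part of any real system.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of pre-established contact numbers, maintained
# separately from any incoming message (placeholder values only).
TRUSTED_DIRECTORY = {
    "sarah.editor": "+1-555-0100",
}

# Hypothetical set of request types treated as high-stakes.
HIGH_STAKES = {"payment", "credentials", "data_export"}


@dataclass
class Request:
    claimed_sender: str      # who the caller or email claims to be
    kind: str                # e.g. "payment", "meeting", "data_export"
    urgent: bool             # does the request press for immediate action?
    contact_in_message: str  # number or address supplied by the request itself


def needs_verification(req: Request) -> bool:
    """Pause on anything high-stakes or pressed as urgent."""
    return req.kind in HIGH_STAKES or req.urgent


def callback_number(req: Request) -> Optional[str]:
    """Return the pre-established number for the claimed sender.

    The contact details inside the request are deliberately ignored,
    because an attacker controls them.
    """
    return TRUSTED_DIRECTORY.get(req.claimed_sender)


def next_step(req: Request) -> str:
    if not needs_verification(req):
        return "proceed"
    number = callback_number(req)
    if number is None:
        return "escalate: no trusted contact on file"
    return f"pause: call {number} to confirm"
```

A call like `next_step(Request("sarah.editor", "payment", True, "+1-555-0199"))` returns an instruction to call the number on file, not the number that just rang. The point of the sketch is that `contact_in_message` is never consulted when choosing where to verify.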

Final Thoughts

The landscape of digital trust has fundamentally shifted. Deepfakes aren't just a technological marvel; they are a profound challenge to our perception of reality and our inherent human trust. The future of cybersecurity isn't solely about hardening systems; it's about strengthening the human firewall, making each of us a more resilient and informed decision-maker. As the line between real and artificial continues to blur, our collective vigilance, critical thinking, and commitment to verification will be the ultimate safeguards against the insidious march of advanced social engineering. Don't be the one who gets caught; be the one who catches them.

Institutional Data Briefing

| Metric | Observed Value | Standard Deviation |
|---|---|---|
| Market Adoption | 68.4% | ±2.1% |
| Compliance Score | 94/100 | N/A |
