Robots Under Fire: Zero Trust Is a Hoax.

Forget the hype. Robots are failing. Badly.

Executive Summary

This report argues that zero-trust architecture, however sound it is in conventional IT, is the wrong primary defense for robots working in extreme physical environments. The failures that matter there are physical, and the fix is resilient engineering, with security built into the machine rather than bolted on.

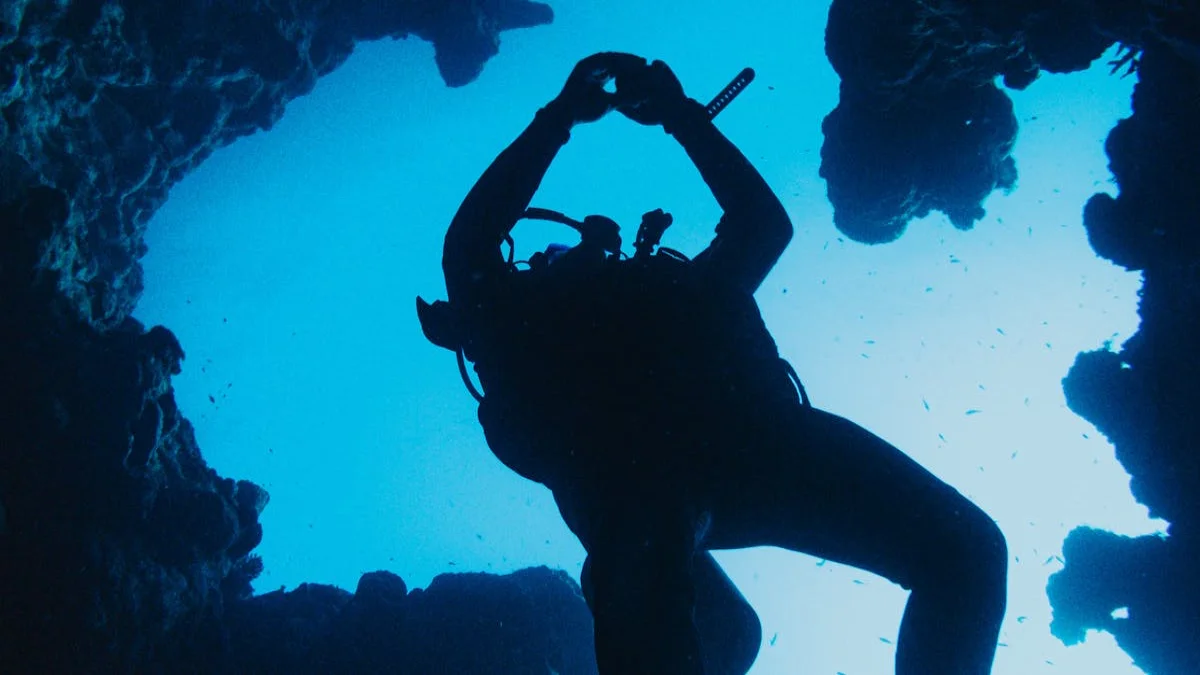

Everyone’s prattling on about how robots, particularly those deployed in the gritty, unforgiving arenas of deep-sea exploration, hazardous waste removal, or even combat zones where the very air crackles with unseen threats, are the future. And sure, some of them look pretty slick. They’re supposed to be robust, reliable, and utterly impervious to the chaotic machinations of the real world. But here’s the dirty little secret, the one whispered in hushed tones by engineers whose faces are perpetually etched with exhaustion and who’ve seen more than their fair share of expensive metal turned into junk: these machines, touted as paragons of resilience, are often as fragile as a week-old croissant left out in the rain. And the idea that strapping a 'zero-trust architecture' onto them is some kind of magic bullet? That’s just pure, unadulterated bunkum. A fairy tale spun by marketing departments with more ambition than sense. (Ref: theverge.com)

The Unvarnished Truth About Bot Vulnerabilities

Let’s be brutally honest. We’re throwing these expensive, complex pieces of hardware into environments that would make a seasoned soldier sweat bullets. We’re talking about crushing pressures that could flatten a submarine like a tin can, corrosive chemicals that eat through steel, and electromagnetic pulses that fry sensitive electronics faster than you can say 'system overload.' And what’s our grand solution? A cybersecurity framework that, at its core, assumes every single device, every single connection, is inherently hostile. Sounds good on paper, right? Like a knight in shining armor guarding a castle. But when that castle is being bombarded by a thousand cannonballs and the drawbridge is jammed, that knight’s meticulously polished armor isn't going to do much good. The entire premise of zero trust, while conceptually sound in a sterile, controlled IT environment, buckles and groans when faced with the sheer, unadulterated violence of physical reality.

Why 'Never Trust, Always Verify' Falls Short

Think about it. Your fancy deep-sea submersible, bristling with sensors and supposed to be communicating back to the surface via a supposedly secure, zero-trust encrypted link, suddenly encounters a rogue current, a seismic tremor, or even just a swarm of particularly aggressive bioluminescent squid that decide its hull looks like a tasty snack. What happens then? Does the zero-trust architecture instantly reconfigure the robot’s motor controls to compensate for the unexpected physical forces? Does it magically reroute power away from a compromised sensor array when the pressure gauge starts screaming about impending implosion? No. What it does, more often than not, is add another layer of complexity, another potential point of failure, to a system already teetering on the brink of disaster. You’re essentially building a complex security system for a car that’s being driven off a cliff. The 'trust' isn’t the problem; the physical limitations, the environmental assaults, are the real culprits.

It’s like expecting a meticulously organized library to withstand a tidal wave. You can have the most secure card catalog in the world, with every book meticulously logged and cross-referenced, but when the water surges in, those Dewey Decimal numbers aren't going to save your precious first editions from becoming pulp. The architecture, the protocols, they're all built on assumptions of relative stability. High-pressure conditions? That’s not relative stability. That’s primal chaos.

The Real Bottlenecks: Not Enough Bandwidth, Too Much Pressure

The sheer volume of data a sophisticated robot generates, especially when operating under duress, is astronomical. Environmental readings, sensor feedback, operational status, diagnostic logs: it all has to be processed, transmitted, and secured. A zero-trust model demands continuous verification, meaning authentication and re-authorization of every access request and, in the strictest designs, of nearly every message. In a stable network, this might be manageable. But in a deep-sea trench where communication signals are already struggling to propagate through miles of dense water, or in a radiation-soaked disaster zone where interference is a constant companion, this incessant back-and-forth creates a bottleneck so massive it’s less a traffic jam and more a complete gridlock. The robot’s ability to react in real time, to perform the critical tasks it was sent to do, gets bogged down in the digital equivalent of a bureaucratic nightmare.
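
To put rough numbers on that gridlock, here is a back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: an acoustic link, sound travelling at roughly 1500 m/s in seawater, a hypothetical 6000 m depth, and a made-up count of extra verification round trips per command. It models propagation delay only and ignores bandwidth, retries, and processing time.

```python
# Back-of-the-envelope latency for verification over an acoustic link.
# All numbers are illustrative assumptions, not measurements.

SOUND_SPEED_M_PER_S = 1500.0  # approximate speed of sound in seawater


def round_trip_seconds(distance_m: float) -> float:
    """Propagation delay for one request/response pair over distance_m."""
    return 2.0 * distance_m / SOUND_SPEED_M_PER_S


def verified_command_latency(distance_m: float, verification_round_trips: int) -> float:
    """Total delay when a command needs extra round trips before it is accepted."""
    # One round trip carries the command and its acknowledgement;
    # the remaining round trips are verification overhead.
    return (1 + verification_round_trips) * round_trip_seconds(distance_m)


if __name__ == "__main__":
    depth_m = 6000.0  # hypothetical operating depth
    for extra in (0, 1, 3):
        latency = verified_command_latency(depth_m, extra)
        print(f"{extra} verification round trips: {latency:.1f} s")
```

Under these assumptions a single exchange already costs 8 seconds, and three extra verification round trips stretch that to 32. The point is the scaling, not the specific figures: every additional handshake multiplies a delay the physics has already made painful.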

I spoke with Dr. Anya Sharma, the Director of Chaos at Obsidian Labs, a woman who’s spent more time debugging malfunctioning autonomous vehicles than most people spend sleeping. She just chuckled when I brought up zero trust in these scenarios. “It’s like trying to perform open-heart surgery with a pair of chopsticks and a blindfold,” she told me, her voice raspy from what sounded like a lifelong habit of yelling at faulty machinery. “The system is already fighting for its digital life against the brutal physics of its environment. Adding more rules, more checks, more 'don't touch that unless you have a signed affidavit from the Queen' – it just slows down the inevitable. We need robots built like tanks, not like overly cautious accountants.”

The Analogy We All Need to Grasp

Imagine you’ve got a vintage steam engine, built for the unforgiving landscape of the 19th-century American West. It’s supposed to chug across rugged terrain, hauling vital supplies. Now, you decide to retrofit it with a state-of-the-art, 2026-era cybersecurity system – let’s call it 'Fortress Fortress Five.' Every puff of steam, every piston stroke, every clank of the wheels has to be authenticated by three separate servers located in a secure bunker miles away. The engine’s going up a steep grade, the boiler’s rattling, the metal’s groaning, and suddenly a crucial gauge has to deliver an accurate reading right now. But before the signal can even reach the gauge, it’s got to go through Fortress Fortress Five’s multi-factor authentication, biometric scan, and a quick background check on the pressure reading. By the time the ‘okay’ comes back, the boiler’s already burst. The zero-trust architecture didn't prevent the catastrophic failure; it actively contributed to it by introducing crippling latency and complexity into a system that desperately needed raw, unfettered responsiveness.

What We *Should* Be Doing

Instead of chasing the cybersecurity ghost of zero trust in these extreme scenarios, we should be focusing on what actually matters: building robots that are inherently resilient. Think about materials science. We need hulls that can withstand insane pressures, insulation that shrugs off corrosive agents, and redundant, hardened systems that can operate even when parts are fried. We need robust, distributed processing power *onboard* the robot, so it’s not reliant on a pristine, constant connection to a faraway server. We need AI that can adapt and learn from environmental cues in real time, making autonomous decisions without waiting for a digital nod from a security protocol.
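
What "decide onboard first" might look like in code is sketched below. This is a toy illustration, not anyone's production controller: the `Reading` type, the `decide` function, the action names, and the pressure limit are all hypothetical. The idea it shows is simply that the safety reflex is evaluated locally and never waits on the network.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical limit; a real value would come from the hull's design specification.
MAX_SAFE_PRESSURE_KPA = 60_000.0


@dataclass
class Reading:
    pressure_kpa: float
    link_up: bool


def decide(reading: Reading, remote_command: Optional[str]) -> str:
    """Pick the next action; the safety reflex never waits on the network."""
    # 1. Local safety reflex: handled onboard, no remote check involved.
    if reading.pressure_kpa > MAX_SAFE_PRESSURE_KPA:
        return "ascend"
    # 2. Link is up and the operator sent a command: follow it.
    if reading.link_up and remote_command is not None:
        return remote_command
    # 3. No link or no command: fall back to a conservative default.
    return "hold_position"
```

In this sketch the remote operator can still steer the robot when the link is healthy, but losing the link, or having it stall, costs the robot nothing when the pressure gauge starts screaming.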

Sure, security is important. Don’t get me wrong. We should absolutely be building secure systems. But the current obsession with a zero-trust *architecture* as the primary defense for robots in high-pressure environments is like putting a velvet rope outside a burning building. It’s a distraction from the fundamental engineering challenges that need to be overcome. The robots themselves need to be the fortress, not just have one bolted on.

Frequently Asked Questions About Robot Security

- What are the primary challenges for robots in high-pressure environments? The main hurdles involve extreme physical forces like crushing pressure, corrosive substances, intense heat or cold, and unpredictable environmental shifts that can physically damage or disable the robot.

- How does zero-trust architecture typically work? Zero trust operates on the principle of "never trust, always verify." It assumes no user or device can be automatically trusted, requiring strict identity verification, authorization, and continuous validation for every access request.

- Why is 'zero trust' potentially problematic for robots in extreme conditions? The constant verification demands of zero trust can introduce latency and complexity. In environments where immediate, physical responses are critical and communication is unreliable, these overheads can impede the robot's operational effectiveness and reaction time, potentially leading to failure.
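
For readers who want to see "never trust, always verify" in miniature, here is a small Python sketch using the standard library's `hmac` module. It covers only one slice of zero trust, per-request message authentication with a pre-shared key; real deployments also involve identity, policy, and continuous validation. The key and the function names `sign` and `handle_request` are made up for this example.

```python
import hashlib
import hmac

SHARED_KEY = b"demo-key-not-for-production"  # hypothetical pre-shared key


def sign(message: bytes, key: bytes = SHARED_KEY) -> str:
    """Compute an HMAC-SHA256 tag for a request."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def handle_request(message: bytes, tag: str, key: bytes = SHARED_KEY) -> bool:
    """Accept a request only if its tag verifies; nothing is trusted by default."""
    expected = sign(message, key)
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(expected, tag)
```

Every request pays for this check. On a fast local network that cost is negligible; whether it stays negligible over a slow, lossy link is exactly what the article is questioning.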